Alexa Output

Learn more about output templates for Alexa.

Introduction

Jovo offers the ability to create structured output that is then translated into native platform responses.

This structured output is called an output template. Its root properties are generic output elements that work across platforms. Learn more about how generic output is translated into an Alexa response below.

{ message: `Hello world! What's your name?`, reprompt: 'Could you tell me your name?', listen: true, }

You can also add platform-specific output to an output template. Learn more about Alexa output below.

{
  // ...
  platforms: {
    alexa: {
      // ...
    },
  },
}

Generic Output Elements

Generic output elements are in the root of the output template and work across platforms. Learn more in the Jovo Output docs.

Below, you can find a list of generic output elements that work with Alexa:

message

The generic message element is what Alexa is saying to the user:

{ message: 'Hello world!', }

Under the hood, Jovo translates the message into an outputSpeech object (see the official Alexa docs):

{ "outputSpeech": { "type": "SSML", "ssml": "<speak>Hello world!</speak>" } }
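The wrapping shown above can be sketched as a small TypeScript function. This is an illustration under simplifying assumptions, not Jovo's implementation: the helper name toOutputSpeech is hypothetical, and it neither escapes special characters nor validates the SSML.

```typescript
// Hypothetical helper (not part of the Jovo API): wraps a message in <speak>
// tags to build an Alexa outputSpeech object of type SSML.
interface OutputSpeech {
  type: 'SSML';
  ssml: string;
}

function toOutputSpeech(message: string): OutputSpeech {
  const trimmed = message.trim();
  // Avoid double-wrapping if the message already has a <speak> root element
  const alreadyWrapped = /^<speak>[\s\S]*<\/speak>$/.test(trimmed);
  const ssml = alreadyWrapped ? trimmed : `<speak>${trimmed}</speak>`;
  return { type: 'SSML', ssml };
}

// Usage: produces the same object as the JSON above
const speech = toOutputSpeech('Hello world!');
```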

The outputSpeech response format is limited to 8,000 characters. By default, Jovo trims the content of the message property to that length. Learn more in the output sanitization documentation.
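A minimal sketch of that trimming, assuming a plain cut after 8,000 UTF-16 code units. The function trimToMaxLength is hypothetical; Jovo's output sanitization may count or cut differently, and a naive cut like this one can split an SSML tag in half.

```typescript
// Hypothetical sketch of output sanitization; Jovo's actual logic may differ.
const MAX_OUTPUT_SPEECH_LENGTH = 8000;

function trimToMaxLength(text: string, maxLength: number = MAX_OUTPUT_SPEECH_LENGTH): string {
  // Keep the first maxLength characters (UTF-16 code units) and drop the rest
  return text.length > maxLength ? text.slice(0, maxLength) : text;
}
```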

reprompt

The generic reprompt element is used to ask again if the user does not respond to a prompt after a few seconds:

{ message: `Hello world! What's your name?`, reprompt: 'Could you tell me your name?', }

Under the hood, Jovo translates the reprompt into an outputSpeech object (see the official Alexa docs) inside reprompt:

{ "reprompt": { "outputSpeech": { "type": "SSML", "ssml": "<speak>Could you tell me your name?</speak>" } } }

listen

The listen element determines if Alexa should keep the microphone open and wait for a user's response.

By default (if you don't specify it otherwise in the template), listen is set to true. If you want to close a session after a response, you need to set it to false:

{ message: `Goodbye!`, listen: false, }

Under the hood, Jovo translates listen: false to "shouldEndSession": true in the JSON response.
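Taken together, message, reprompt, and listen map onto the Alexa response roughly like the following sketch. The types and the toAlexaResponseBody function are hypothetical simplifications; the one rule taken from the text above is that only an explicit listen: false sets shouldEndSession to true, so an omitted listen or a listen object (used for dynamic entities) keeps the session open.

```typescript
// Hypothetical, simplified types; the real Jovo output template has more properties.
interface GenericOutput {
  message?: string;
  reprompt?: string;
  // listen can also be an object (for dynamic entities), which keeps the session open
  listen?: boolean | object;
}

interface SsmlSpeech {
  type: 'SSML';
  ssml: string;
}

interface AlexaResponseBody {
  outputSpeech?: SsmlSpeech;
  reprompt?: { outputSpeech: SsmlSpeech };
  shouldEndSession: boolean;
}

function toSsml(text: string): SsmlSpeech {
  return { type: 'SSML', ssml: `<speak>${text}</speak>` };
}

function toAlexaResponseBody(output: GenericOutput): AlexaResponseBody {
  // listen defaults to true, so only an explicit false ends the session
  const body: AlexaResponseBody = { shouldEndSession: output.listen === false };
  if (output.message) {
    body.outputSpeech = toSsml(output.message);
  }
  if (output.reprompt) {
    // The reprompt speech is nested inside its own outputSpeech object
    body.reprompt = { outputSpeech: toSsml(output.reprompt) };
  }
  return body;
}
```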

The listen element can also be used to add dynamic entities for Alexa. Learn more in the $entities documentation.

quickReplies

Alexa does not natively support quick replies. However, when used together with a card or carousel, Jovo automatically turns the generic quickReplies element into buttons for APL:

{
  // ...
  quickReplies: [
    {
      text: 'Button A',
      intent: 'ButtonAIntent',
    },
  ],
}

For this to work, you need to enable APL for your Alexa Skill project. Also, genericOutputToApl needs to be enabled in the Alexa output configuration, which is the default.

If a user taps on a button, a request of the type Alexa.Presentation.APL.UserEvent is sent to your app. You can learn more in the official Alexa docs. To map this type of request to an intent (and optionally an entity), you need to add the following to each quick reply item:

{
  // ...
  quickReplies: [
    {
      text: 'Button A',
      intent: 'ButtonIntent',
      entities: {
        button: {
          value: 'a',
        },
      },
    },
  ],
}

This will add the intent and entities properties to the APL document as arguments.

"arguments": [ { "type": "QuickReply", "intent": "ButtonIntent", "entities": { "button": { "value": "a" } } } ]

When the user taps the button, Jovo maps these arguments to the $input object, so the request can be handled like a regular intent request.

In your handler, you can then access the entities like this:

@Intents(['ButtonIntent'])
showSelectedButton() {
  const button = this.$entities.button.value;
  // ...
}
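The mapping from a quick reply item to APL arguments can be sketched like this. The types and quickReplyToArguments are hypothetical; in particular, returning no arguments for items without an intent is an assumption made for this sketch, not documented Jovo behavior.

```typescript
// Hypothetical types mirroring the quickReplies example above
interface EntityValue {
  value: string;
}

interface QuickReply {
  text: string;
  intent?: string;
  entities?: { [name: string]: EntityValue };
}

interface UserEventArgument {
  type: 'QuickReply' | 'Selection';
  intent: string;
  entities?: { [name: string]: EntityValue };
}

function quickReplyToArguments(reply: QuickReply): UserEventArgument[] {
  // Assumption: without an intent there is nothing to map the tap to
  if (!reply.intent) {
    return [];
  }
  const argument: UserEventArgument = { type: 'QuickReply', intent: reply.intent };
  if (reply.entities) {
    argument.entities = reply.entities;
  }
  return [argument];
}
```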

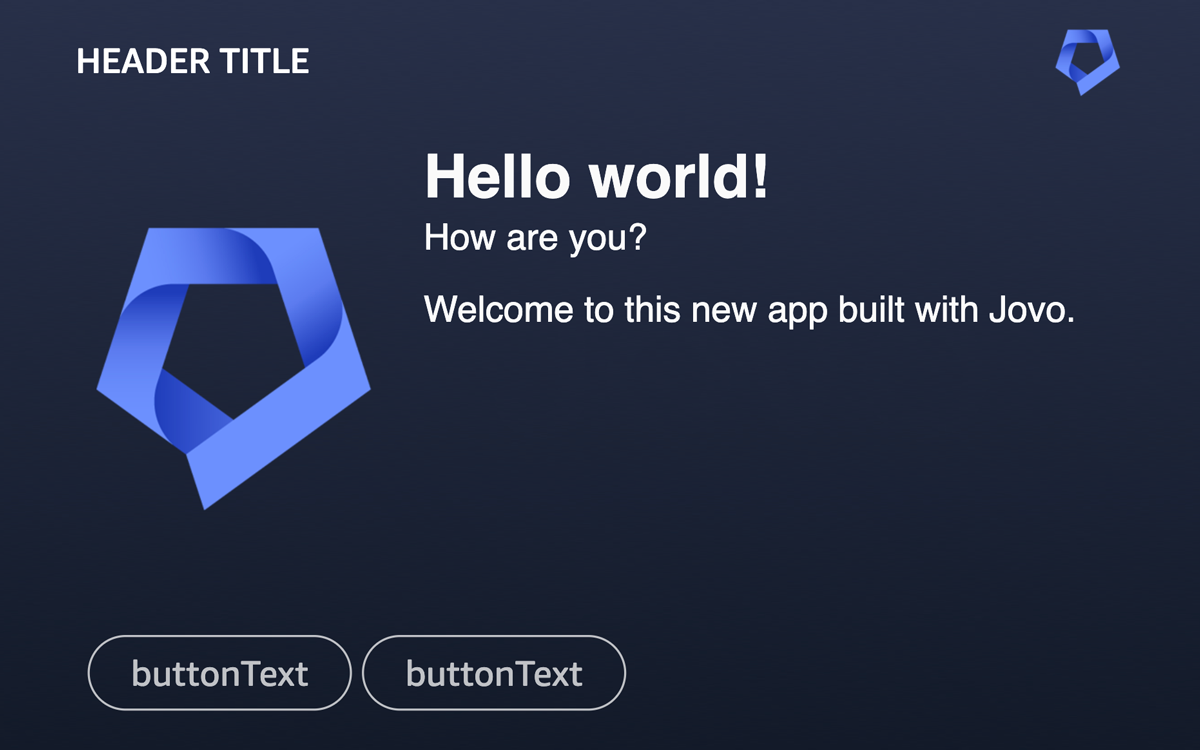

card

Jovo automatically turns the generic card element into a detail screen for APL.

For example, the image above (screenshot from the testing tab in the Alexa Developer Console) is created by the following output template:

{
  // ...
  card: {
    title: 'Hello world!',
    subtitle: 'How are you?',
    content: 'Welcome to this new app built with Jovo.',
    imageUrl: 'https://jovo-assets.s3.amazonaws.com/jovo-icon.png',
    // Alexa properties
    backgroundImageUrl: 'https://jovo-assets.s3.amazonaws.com/jovo-bg.png',
    header: {
      logo: 'https://jovo-assets.s3.amazonaws.com/jovo-icon.png',
      title: 'Header title',
    },
  },
}

Besides the generic card properties, you can add the following optional elements for Alexa:

- backgroundImageUrl: A link to a background image. This is used as backgroundImageSource for the AlexaBackground APL property.
- header: Contains a logo image URL and a title to be displayed at the top bar of the APL screen. These are used as headerAttributionImage and headerTitle for the AlexaHeader APL property.
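The property mapping described in the list above can be sketched as follows. The toAplProps function and its types are hypothetical; only the target property names (backgroundImageSource, headerAttributionImage, headerTitle) come from the text.

```typescript
// Hypothetical, simplified card type based on the example above
interface AlexaCard {
  title: string;
  subtitle?: string;
  content?: string;
  imageUrl?: string;
  backgroundImageUrl?: string;
  header?: { logo?: string; title?: string };
}

interface AplHeaderAndBackgroundProps {
  backgroundImageSource?: string; // used by AlexaBackground
  headerAttributionImage?: string; // used by AlexaHeader
  headerTitle?: string; // used by AlexaHeader
}

function toAplProps(card: AlexaCard): AplHeaderAndBackgroundProps {
  return {
    backgroundImageSource: card.backgroundImageUrl,
    headerAttributionImage: card.header ? card.header.logo : undefined,
    headerTitle: card.header ? card.header.title : undefined,
  };
}
```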

You can also add buttons by using the quickReplies property.

For cards to work, you need to enable APL for your Alexa Skill project. Also, genericOutputToApl needs to be enabled in the Alexa output configuration, which is the default. You can also override the default APL template used for card:

const app = new App({
  // ...
  plugins: [
    new AlexaPlatform({
      output: {
        aplTemplates: {
          card: CARD_APL, // Add imported document here
        },
      },
    }),
  ],
});

Note: If you want to send a home card to the Alexa mobile app instead, we recommend using the nativeResponse property.

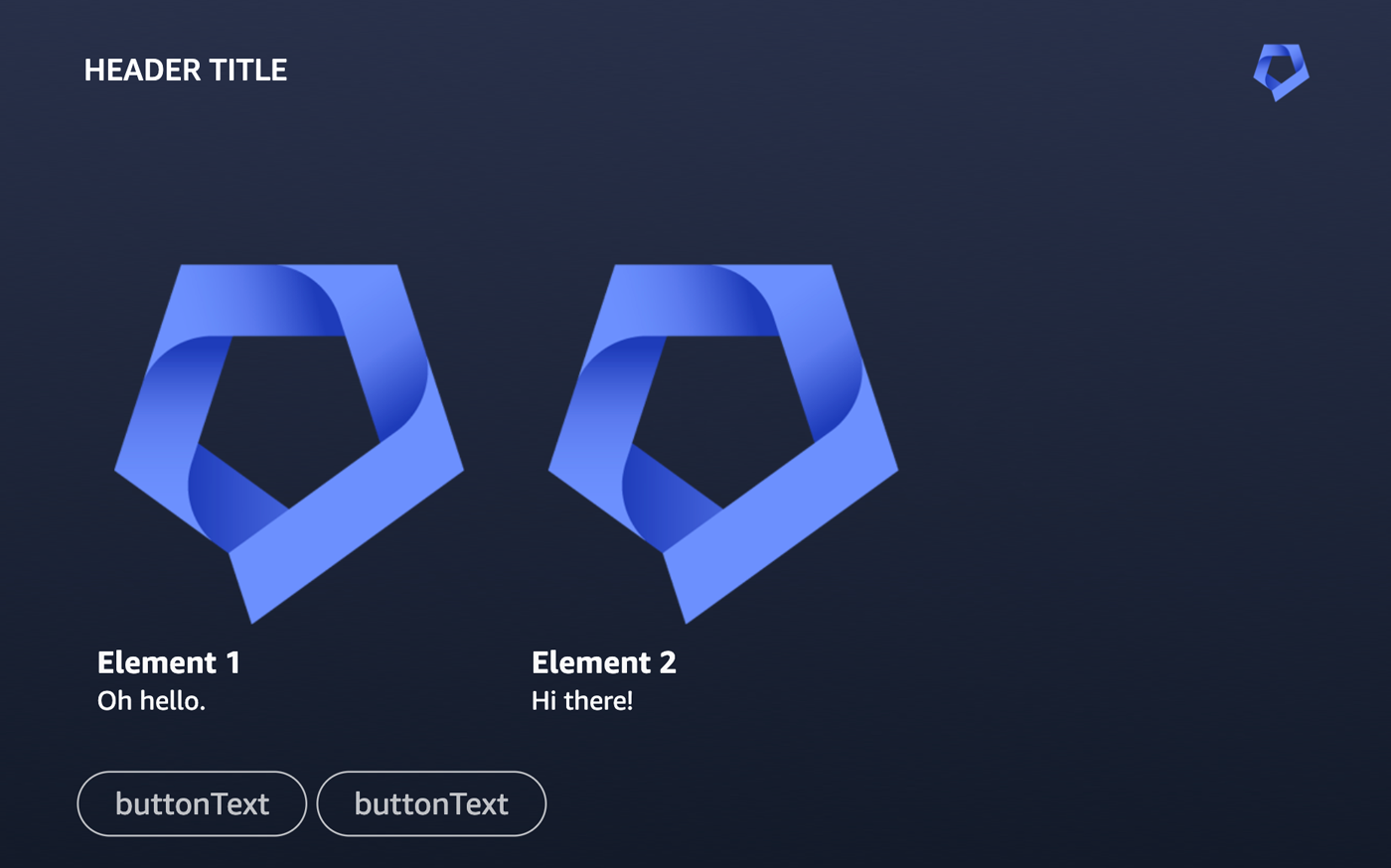

carousel

Alexa does not natively support carousels. However, Jovo automatically turns the generic carousel element into a card slider for APL.

For example, the image above (screenshot from the testing tab in the Alexa Developer Console) is created by the following output template:

{
  // ...
  carousel: {
    items: [
      {
        title: 'Element 1',
        content: 'Oh hello.',
        imageUrl: 'https://jovo-assets.s3.amazonaws.com/jovo-icon.png',
      },
      {
        title: 'Element 2',
        content: 'Hi there!',
        imageUrl: 'https://jovo-assets.s3.amazonaws.com/jovo-icon.png',
      },
    ],
    // Alexa properties
    backgroundImageUrl: 'https://jovo-assets.s3.amazonaws.com/jovo-bg.png',
    header: {
      logo: 'https://jovo-assets.s3.amazonaws.com/jovo-icon.png',
      title: 'Header title',
    },
  },
}

Besides the generic carousel properties, you can add the following optional elements for Alexa:

- backgroundImageUrl: A link to a background image. This is used as backgroundImageSource for the AlexaBackground APL property.
- header: Contains a logo image URL and a title to be displayed at the top bar of the APL screen. These are used as headerAttributionImage and headerTitle for the AlexaHeader APL property.

You can also add buttons by using the quickReplies property.

For the carousel to work, you need to enable APL for your Alexa Skill project. Also, genericOutputToApl needs to be enabled in the Alexa output configuration, which is the default. You can also override the default APL template used for carousel:

const app = new App({
  // ...
  plugins: [
    new AlexaPlatform({
      output: {
        aplTemplates: {
          carousel: CAROUSEL_APL, // Add imported document here
        },
      },
    }),
  ],
});

You can make the carousel clickable by adding a selection object. Once an element is selected by the user, a request of the type Alexa.Presentation.APL.UserEvent is sent to your app. You can learn more in the official Alexa docs. To map this type of request to an intent (and optionally an entity), you need to add the following to each carousel item:

{
  // ...
  carousel: {
    items: [
      {
        title: 'Element A',
        content: 'To my right, you will see element B.',
        selection: {
          intent: 'ElementIntent',
          entities: {
            element: {
              value: 'A',
            },
          },
        },
      },
      {
        title: 'Element B',
        content: 'Hi there!',
        selection: {
          intent: 'ElementIntent',
          entities: {
            element: {
              value: 'B',
            },
          },
        },
      },
    ],
  },
}

This will add the intent and entities properties to the APL document as arguments.

"arguments": [ { "type": "Selection", "intent": "ElementIntent", "entities": { "element": { "value": "A" } } } ]

When the user taps an element, Jovo maps these arguments to the $input object. In the example above, a tap on an element triggers the ElementIntent and contains an entity named element.

In your handler, you can then access the entities like this:

@Intents(['ElementIntent'])
showSelectedElement() {
  const element = this.$entities.element.value;
  // ...
}
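For a whole carousel, building the Selection arguments per item might look like the following sketch. carouselItemArguments and its types are hypothetical, and marking items without a selection object as not clickable (null) is an assumption of this sketch.

```typescript
// Hypothetical types based on the carousel example above
interface EntityMap {
  [name: string]: { value: string };
}

interface CarouselSelection {
  intent: string;
  entities?: EntityMap;
}

interface CarouselItem {
  title: string;
  content?: string;
  imageUrl?: string;
  selection?: CarouselSelection;
}

interface SelectionArgument {
  type: 'Selection';
  intent: string;
  entities?: EntityMap;
}

// One arguments array per item; null marks an item without a selection (not clickable)
function carouselItemArguments(items: CarouselItem[]): (SelectionArgument[] | null)[] {
  return items.map((item) => {
    const selection = item.selection;
    if (!selection) {
      return null;
    }
    const argument: SelectionArgument = { type: 'Selection', intent: selection.intent };
    if (selection.entities) {
      argument.entities = selection.entities;
    }
    return [argument];
  });
}
```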

Alexa Output Elements

It is possible to add platform-specific output elements to an output template. Learn more in the Jovo output documentation.

For Alexa, you can add output elements inside an alexa object:

{
  // ...
  platforms: {
    alexa: {
      // ...
    },
  },
}

Native Response

The nativeResponse property allows you to add native elements exactly how they would be added to the Alexa JSON response.

{
  // ...
  platforms: {
    alexa: {
      nativeResponse: {
        // ...
      },
    },
  },
}

For example, an APL RenderDocument directive (see official Alexa docs) could be added like this:

{
  // ...
  platforms: {
    alexa: {
      nativeResponse: {
        response: {
          directives: [
            {
              type: 'Alexa.Presentation.APL.RenderDocument',
              token: '<some-token>',
              document: { /* ... */ },
              datasources: { /* ... */ },
            },
          ],
        },
      },
    },
  },
}

Learn more about the response format in the official Alexa documentation.

APL

The Alexa Presentation Language (APL) allows you to add visual content and audio (using APLA) to your Alexa Skill. Learn more in the official Alexa docs.

You can add an APL RenderDocument directive (see official Alexa docs) to your response by using the nativeResponse property, for example:

{
  // ...
  platforms: {
    alexa: {
      nativeResponse: {
        response: {
          directives: [
            {
              type: 'Alexa.Presentation.APL.RenderDocument',
              token: '<some-token>',
              document: { /* ... */ },
              datasources: { /* ... */ },
            },
          ],
        },
      },
    },
  },
}

Alternatively, you can use the AplRenderDocumentOutput class provided by Jovo, which wraps your data in a directive in the response for you:

import { AplRenderDocumentOutput } from '@jovotech/platform-alexa';
// ...
someHandler() {
  // ...
  return this.$send(AplRenderDocumentOutput, {
    token: '<some-token>',
    document: { /* ... */ },
    datasources: { /* ... */ },
  });
}
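The wrapping that AplRenderDocumentOutput performs can be pictured with this sketch, which produces the same directive shape as the nativeResponse example above. The function names are hypothetical and not part of @jovotech/platform-alexa, and the APL document is a placeholder.

```typescript
// Hypothetical sketch; not the actual implementation of AplRenderDocumentOutput
interface RenderDocumentOptions {
  token: string;
  document: object;
  datasources?: object;
}

interface RenderDocumentDirective extends RenderDocumentOptions {
  type: 'Alexa.Presentation.APL.RenderDocument';
}

function toRenderDocumentDirective(options: RenderDocumentOptions): RenderDocumentDirective {
  return {
    type: 'Alexa.Presentation.APL.RenderDocument',
    token: options.token,
    document: options.document,
    datasources: options.datasources,
  };
}

// Places the directive where Alexa expects it: response.directives
function toNativeResponse(directive: RenderDocumentDirective) {
  return { response: { directives: [directive] } };
}

// Usage with placeholder values
const nativeResponse = toNativeResponse(
  toRenderDocumentDirective({
    token: '<some-token>',
    document: { type: 'APL', version: '1.0', mainTemplate: { items: [] } },
  }),
);
```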

Learn more about APL in the following sections:

- APL Configuration: How to enable APL for your Alexa Skill

- APL User Events: How to respond to APL touch input

Jovo can also turn generic output elements into APL templates. Learn more in the quickReplies, card, and carousel sections above.

APL Configuration

To support APL, you need to enable the ALEXA_PRESENTATION_APL interface for your Alexa Skill. You can do so in the Alexa Developer Console. However, we recommend using the Jovo CLI and adding the interface to your Alexa project configuration using the files property:

new AlexaCli({
  files: {
    'skill-package/skill.json': {
      manifest: {
        apis: {
          custom: {
            interfaces: [
              {
                type: 'ALEXA_PRESENTATION_APL',
                supportedViewports: [
                  {
                    mode: 'HUB',
                    shape: 'RECTANGLE',
                    minHeight: 600,
                    maxHeight: 1279,
                    minWidth: 1280,
                    maxWidth: 1920,
                  },
                  // ...
                ],
              },
            ],
          },
        },
      },
    },
  },
  // ...
});

You can find all supported APL viewports in the official Alexa docs.

This adds the interface to your skill.json manifest during the build process. To update your Skill project, you can follow these steps:

# Build Alexa project files
$ jovo build:platform alexa
# Deploy the files to the Alexa Developer Console
$ jovo deploy:platform alexa

Learn more about Alexa CLI commands here.
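Each supportedViewports entry in the configuration above describes a range of screen sizes. The hypothetical matchesViewport helper below shows how such a range can be read, using the values from that configuration. Alexa performs the actual matching; treating the bounds as inclusive is an assumption of this sketch.

```typescript
// Hypothetical helper for reading a supportedViewports entry; Alexa does the real matching
interface ViewportRange {
  mode: string;
  shape: string;
  minHeight: number;
  maxHeight: number;
  minWidth: number;
  maxWidth: number;
}

// Assumes inclusive bounds on both dimensions
function matchesViewport(range: ViewportRange, mode: string, shape: string, width: number, height: number): boolean {
  return (
    range.mode === mode &&
    range.shape === shape &&
    width >= range.minWidth &&
    width <= range.maxWidth &&
    height >= range.minHeight &&
    height <= range.maxHeight
  );
}

// Values copied from the supportedViewports entry in the configuration above
const hubRange: ViewportRange = {
  mode: 'HUB',
  shape: 'RECTANGLE',
  minHeight: 600,
  maxHeight: 1279,
  minWidth: 1280,
  maxWidth: 1920,
};
```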

APL User Events

APL also supports touch input for buttons or list selections. If a user taps on an element like this, a request of the type Alexa.Presentation.APL.UserEvent is sent to your app. You can learn more in the official Alexa docs.

Similar to how it works with quick replies and carousel items, you can add the following as arguments to your APL document:

- type: Can be Selection or QuickReply. Required.
- intent: The intent name the user input should get mapped to. Required.
- entities: The entities (slots) that should be added to the user input. Optional.

"arguments": [ { "type": "Selection", "intent": "ElementIntent", "entities": { "element": { "value": "A" } } } ]

When a user selects that element, the intent and entities get added to the $input object. This way, you can deal with APL user events in your handlers the same way as with typical intent requests.

In your handler, you can then access the entities like this:

@Intents(['ElementIntent'])
showSelectedElement() {
  const element = this.$entities.element.value;
  // ...
}
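The mapping from an APL user event to an intent and entities can be sketched like this. The request type is a simplified view of Alexa.Presentation.APL.UserEvent, and userEventToInput and isJovoArgument are hypothetical names; taking the first matching argument is an assumption, not necessarily what Jovo does.

```typescript
// Hypothetical, simplified view of an Alexa.Presentation.APL.UserEvent request
interface UserEventRequest {
  type: 'Alexa.Presentation.APL.UserEvent';
  arguments?: unknown[];
}

interface EntityMap {
  [name: string]: { value: string };
}

// Shape of the arguments described above (type and intent are required)
interface JovoUserEventArgument {
  type: 'Selection' | 'QuickReply';
  intent: string;
  entities?: EntityMap;
}

interface MappedInput {
  intent: string;
  entities: EntityMap;
}

function isJovoArgument(arg: unknown): arg is JovoUserEventArgument {
  if (typeof arg !== 'object' || arg === null) {
    return false;
  }
  const candidate = arg as { type?: unknown; intent?: unknown };
  return (
    (candidate.type === 'Selection' || candidate.type === 'QuickReply') &&
    typeof candidate.intent === 'string'
  );
}

// Returns the intent and entities of the first matching argument, if any
function userEventToInput(request: UserEventRequest): MappedInput | undefined {
  const args = request.arguments || [];
  for (const arg of args) {
    if (isJovoArgument(arg)) {
      return { intent: arg.intent, entities: arg.entities || {} };
    }
  }
  return undefined;
}
```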

Alexa Output Configuration

This is the default output configuration for Alexa:

const app = new App({
  // ...
  plugins: [
    new AlexaPlatform({
      output: {
        genericOutputToApl: true,
        aplTemplates: {
          carousel: CAROUSEL_APL,
          card: CARD_APL,
        },
      },
    }),
  ],
});

It includes the following properties:

- genericOutputToApl: Determines if generic output like quickReplies, card, and carousel should automatically be converted into an APL directive.
- aplTemplates: Allows the app to override the default APL templates used for carousel and card.
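The default behavior described above can be sketched as a merge of user settings with defaults. resolveOutputConfig and the DEFAULT_*_APL placeholders are hypothetical; they stand in for Jovo's bundled templates and are not real APL documents.

```typescript
// Hypothetical, simplified config shape based on the default configuration above
interface AlexaOutputConfig {
  genericOutputToApl: boolean;
  aplTemplates: { carousel?: object; card?: object };
}

// Placeholders standing in for the bundled APL documents
const DEFAULT_CARD_APL = { type: 'APL', version: '1.0', mainTemplate: { items: [] } };
const DEFAULT_CAROUSEL_APL = { type: 'APL', version: '1.0', mainTemplate: { items: [] } };

function resolveOutputConfig(custom: Partial<AlexaOutputConfig> = {}): AlexaOutputConfig {
  return {
    // genericOutputToApl is enabled unless explicitly turned off
    genericOutputToApl: custom.genericOutputToApl !== undefined ? custom.genericOutputToApl : true,
    // Templates passed by the app replace the defaults one by one
    aplTemplates: {
      card: DEFAULT_CARD_APL,
      carousel: DEFAULT_CAROUSEL_APL,
      ...custom.aplTemplates,
    },
  };
}
```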

Alexa Output Classes

The Alexa integration also offers a variety of convenience output classes that help you return use case specific responses, for example to request permissions from the user. You can find all output classes here.

You can import any of the classes and then use them with the $send() method, for example:

import { AskForPermissionOutput } from '@jovotech/platform-alexa';
// ...
someHandler() {
  // ...
  return this.$send(AskForPermissionOutput, { /* options */ });
}

Progressive Responses

Alexa offers the ability to send progressive responses, which means you can send an initial response while preparing the final response. This is helpful in cases where you have data-intensive tasks (like API calls) and want to give the user a heads-up.

To send a progressive response, you can use a convenience output class called ProgressiveResponseOutput:

import { ProgressiveResponseOutput } from '@jovotech/platform-alexa';
// ...
async yourHandler() {
  await this.$send(ProgressiveResponseOutput, { speech: 'Alright, one second.' });
  // ...
  return this.$send('Done');
}
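ProgressiveResponseOutput takes care of the API call for you. For reference, the following sketch only builds the HTTP request that Alexa's Progressive Response API expects: a POST to {apiEndpoint}/v1/directives carrying a VoicePlayer.Speak directive. In a real request, apiEndpoint and apiAccessToken come from context.System and requestId from the incoming request; buildProgressiveResponseRequest is a hypothetical helper, and nothing is actually sent.

```typescript
// Hypothetical helper: builds (but does not send) a Progressive Response API request
interface ProgressiveResponseRequest {
  url: string;
  method: 'POST';
  headers: { [name: string]: string };
  body: string;
}

function buildProgressiveResponseRequest(
  apiEndpoint: string,
  apiAccessToken: string,
  requestId: string,
  speech: string,
): ProgressiveResponseRequest {
  return {
    url: `${apiEndpoint}/v1/directives`,
    method: 'POST',
    headers: {
      Authorization: `Bearer ${apiAccessToken}`,
      'Content-Type': 'application/json',
    },
    // The header ties the directive to the request that is still being processed
    body: JSON.stringify({
      header: { requestId },
      directive: { type: 'VoicePlayer.Speak', speech },
    }),
  };
}
```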